Stop relying on restrictive cloud subscriptions and slow web interfaces for your creative AI generation needs today. Most users are trapped in GUI bottlenecks that throttle the true power of their high end enthusiast hardware.

You can bypass every limitation by moving your entire production workflow into a dedicated Python virtual environment. This transition unlocks direct access to your VRAM and allows for precise control over every generation parameter.

Local Intelligence Experience

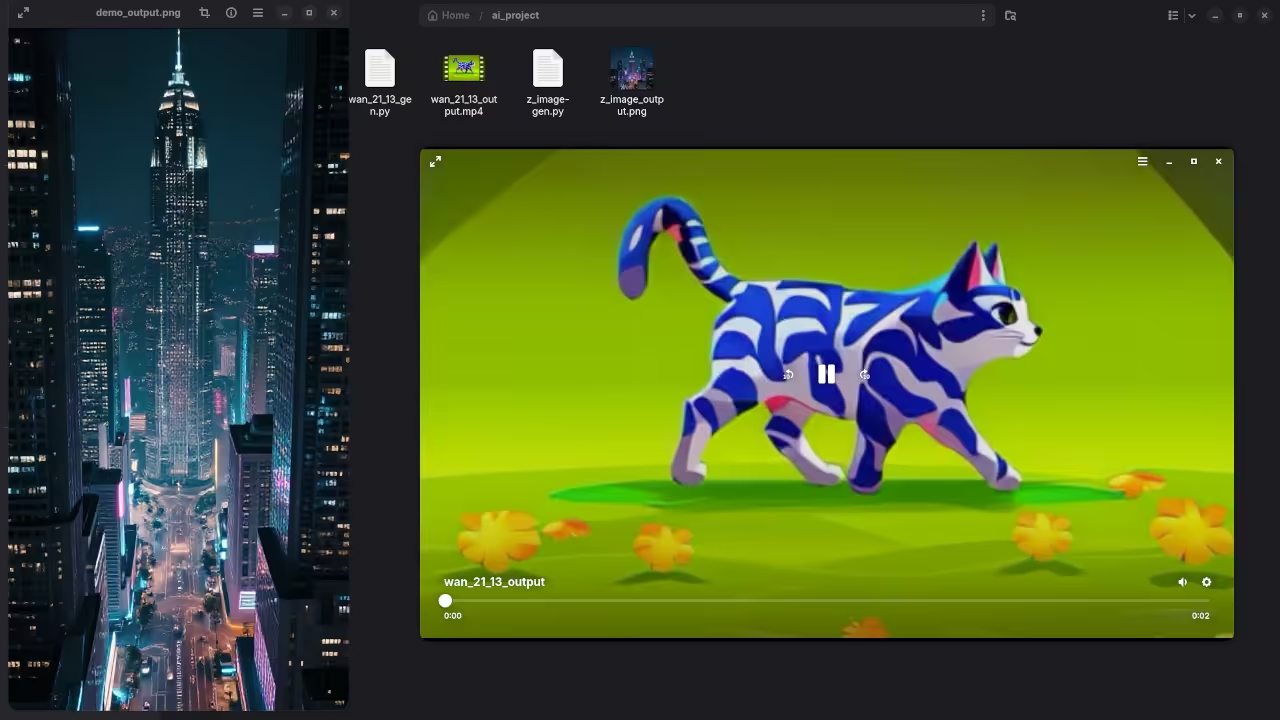

Imagine the thrill of watching a high definition video render locally using nothing but raw code and optimization. The initial setup feels like a complex puzzle until the first frame appears on your high resolution monitor.

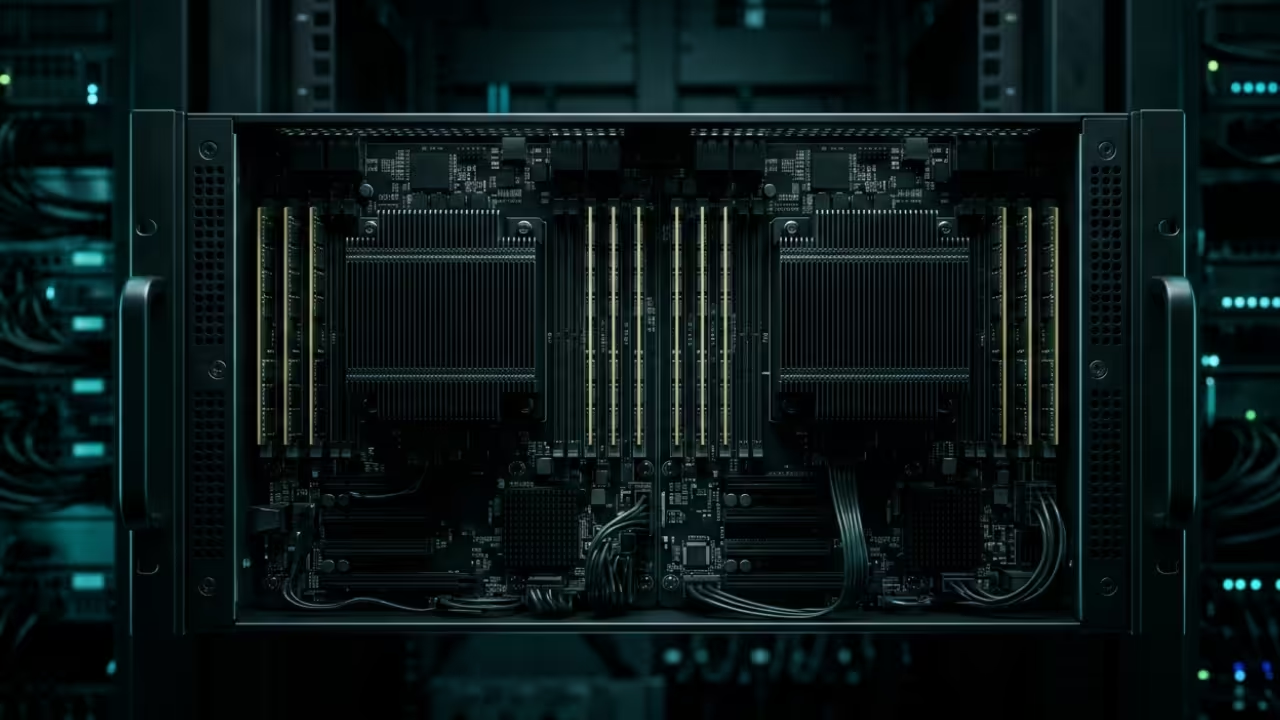

Your fans spin up as the MI60 processes billions of parameters to create fluid and cinematic motion. This level of technical sovereignty provides a creative edge that no standard consumer software can ever match.

Optimizing The Generation Pipeline

The transition from bloated graphical interfaces to a lean Python script reduces your system overhead by several gigabytes. You gain the ability to chain multiple models together for a seamless text to image to video production pipeline.

Using a virtual environment ensures that your system remains clean while you experiment with the latest cutting edge libraries. This approach represents the pinnacle of modern digital craftsmanship for those who demand absolute performance.

Insider Configuration Secrets

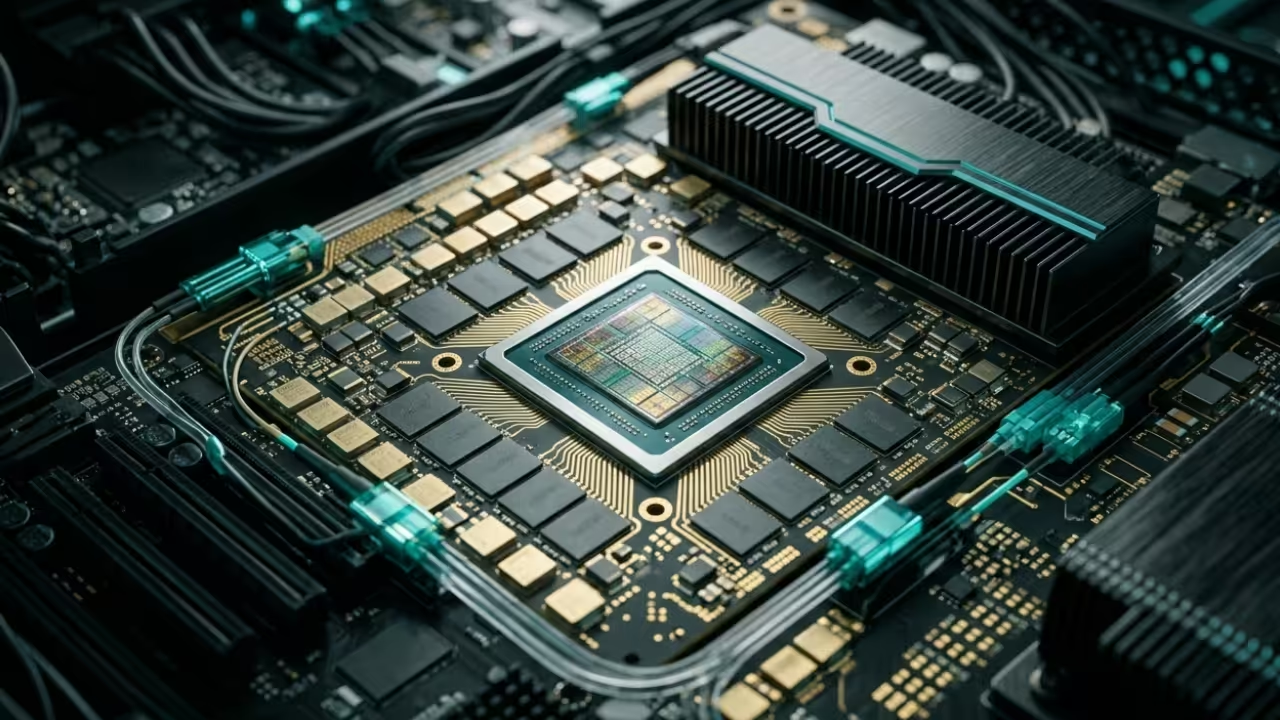

To maximize your AMD hardware you must prioritize the hipBLAS implementation over standard CPU based libraries for faster processing. One insider secret is to set the HSA_OVERRIDE_GFX_VERSION to 9.0.6 to ensure full compatibility with older Instinct cards.

This specific configuration allows your system to treat the professional accelerator as a native high performance computing device. You will notice an immediate jump in tokens per second once the memory mapping is correctly aligned.

| Model Type | VRAM Usage | Processing Engine |

|---|---|---|

| GGUF Image | 4GB to 8GB | llama-cpp-python |

| Wan2.1 T2V | 12GB to 20GB | PyTorch ROCm |

| Wan2.2 I2V | 24GB to 30GB | Diffusers Hook |

| Model Type | VRAM Usage | Processing Engine |

Master The Professional Stack

Master the Professional Stack by exploring these essential resources for your continued technical growth and career development. You can find comprehensive guides and advanced literature at the Edward Ojambo author store on Amazon.

- Books: https://www.amazon.com/stores/Edward-Ojambo/author/B0D94QM76N

- Blueprints: https://ojamboshop.com

- Tutorials: https://ojambo.com/contact

- Consultations: https://ojamboservices.com/contact

These tools represent the future of independent media production and high level computational creativity in the modern era. By mastering the command line you position yourself at the absolute forefront of the generative artificial intelligence revolution.

Start building your local environment today and reclaim your digital freedom from the limitations of the cloud. Your hardware was designed for greatness so it is time to let it perform at its maximum capacity.

🚀 Recommended Resources

Disclosure: Some of the links above are referral links. I may earn a commission if you make a purchase at no extra cost to you.