Tag: Machine Learning

-

Stop Trusting AI Code: Secrets of the MI60 Hardware Audit Stack

Learn how to secure your infrastructure by deploying an autonomous AI code auditor on AMD hardware to catch critical vulnerabilities before they collapse your entire production environment today.

-

The Secret Weapon for Instant High-Quality AI Voiceovers in ComfyUI

Discover how to integrate the ultra-lightweight Kokoro-82M model into your ComfyUI workflow to generate professional human-like speech for your creative projects instantly and efficiently.

-

Unleashing Local Video Intelligence: The Python Command Line Secret

Unlock the power of local AI by running advanced video and image models using Python.

-

AMD AI Beginners Masterclass: ROCm, ComfyUI, and Local Models Explained (2026 Guide)

Master the AMD AI revolution with ROCm 7.2 and ComfyUI for professional local generation.

-

Understanding Local AI Architecture: GGUF and Quantization

Introduction: Local AI development is becoming very popular among Linux users.

-

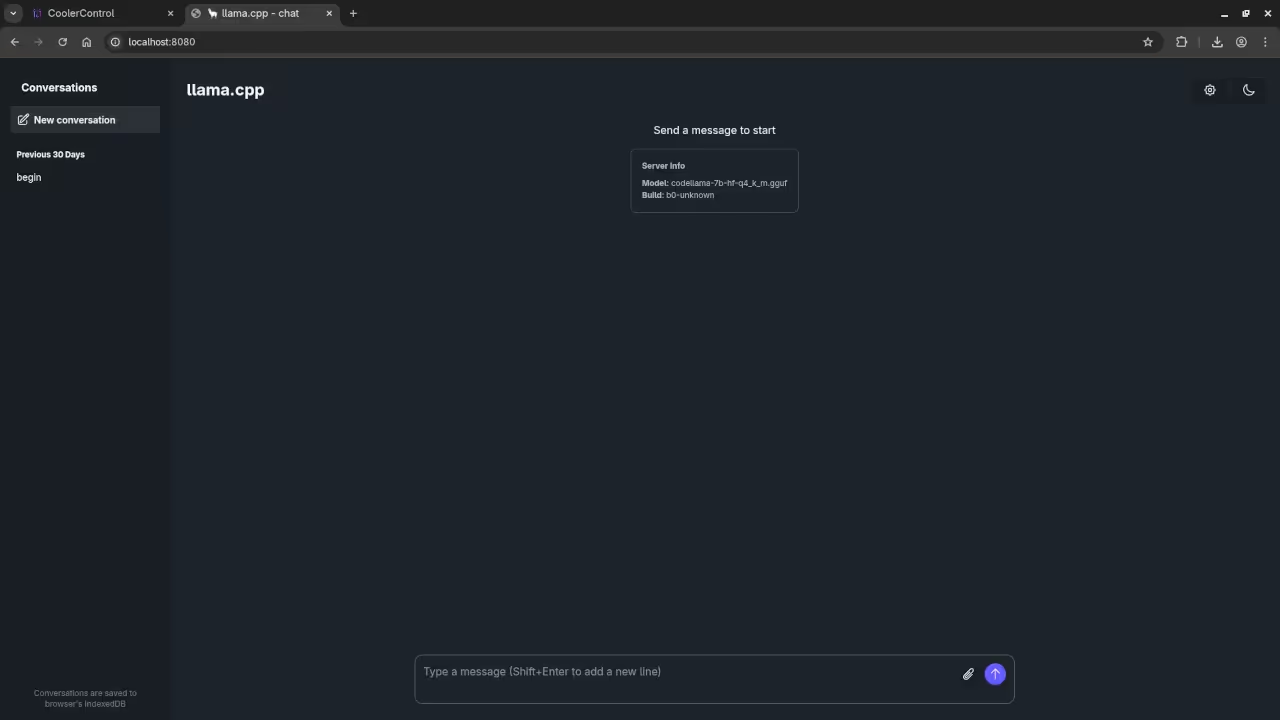

Review: Generative AI codellama-7b-hf-q4_k_m.gguf Model

Steps to Configure Llama.cpp WebUI with Codellama 7B on Fedora 43. In this tutorial, we will go through the steps to configure the Llama.cpp WebUI with Codellama 7B running on a Linux system with an AMD Instinct MI60 32GB HBM2 GPU.

-

Review: Generative AI DeepSeek-R1 32B Model

How to Run Ollama with DeepSeek-R1 32B LLM on Fedora 42 – Open Source AI for Everyone. Introduction: In this post, we will walk through the steps to get Ollama running on your system, with a special focus on using the DeepSeek-R1 32B LLM.
