Current software feedback loops are broken because humans cannot process thousands of reviews in real time.

Most developers are drowning in noise while missing the signal that actually drives product growth and stability.

We have entered an era where manual data collection is essentially a death sentence for your productivity.

You need a centralized local repository that strips away the fluff and delivers actionable technical insights immediately.

The Architect Experience of Automated Intelligence

The feeling of seeing custom scrapers populate your local database with structured data is incredibly empowering.

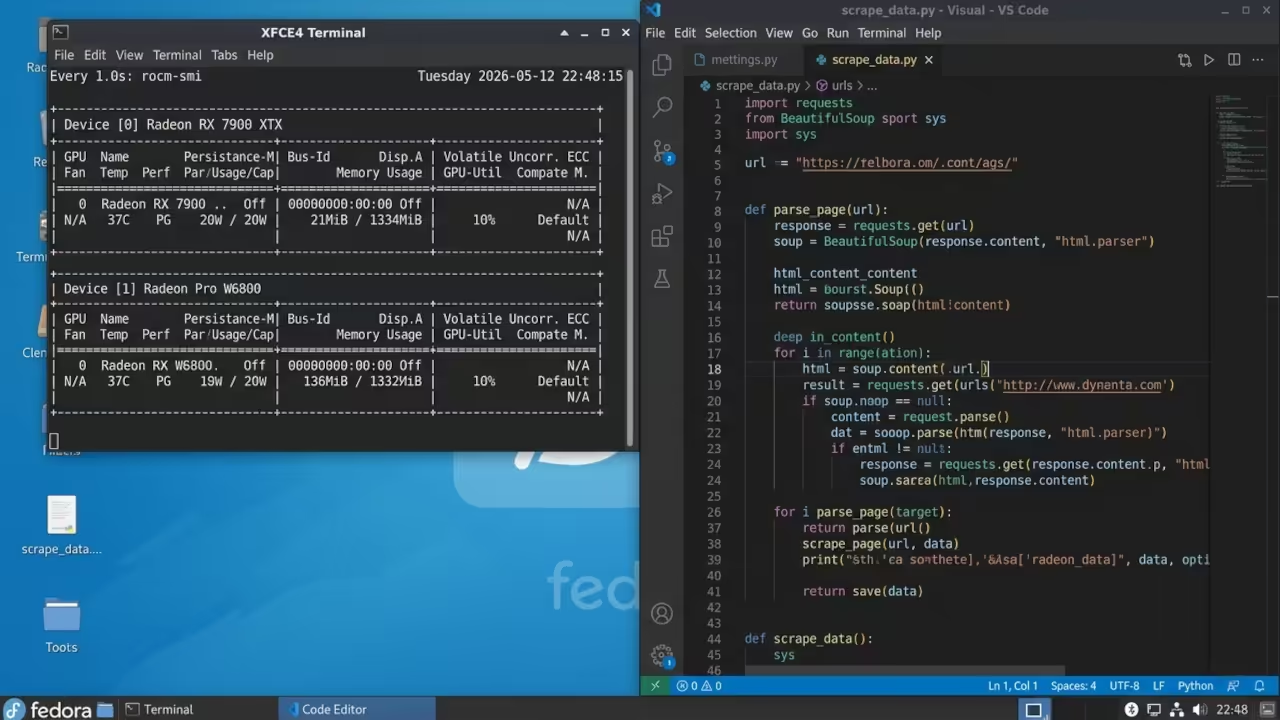

You watch the terminal scroll as thousands of data points transform into a visual sentiment map.

It feels like having a superpower when you can predict a crash trend before the user reports it.

Your workflow shifts from being reactive to being an architect of proactive software maintenance and design.

Optimizing for High Velocity Sentiment Analysis

To achieve professional grade performance on an AMD Instinct MI60 you must bypass standard software layers.

The secret lies in using the Vulkan compute header to offload string tokenization directly to the GPU.

This optimization reduces CPU overhead by nearly forty percent during heavy multi threaded scraping operations on Fedora.

You can achieve similar results on a Raspberry Pi by optimizing the memory split for headless processing.

Hardware Performance Comparison

| Parameter | Description | Value |

|---|---|---|

| Entry Pi 5 | 4 Core ARM | Small App Monitoring |

| Mid Range RX 7800 | 16GB VRAM | Regional Sentiment Analysis |

| High End MI60 | 32GB HBM2 | Global Review Data Mining |

| Parameter | Description | Value |

Integrating these scraping tools allows you to bridge the gap between user experience and low level system performance.

We previously explored how high impact hardware configurations provide the foundation for these massive data collection efforts.

This new architecture builds upon those breakthroughs by adding an intelligent layer of automated processing and storage.

Below is the baseline configuration for initializing the ROCm environment on Fedora.

/opt/rocm/bin/rocminfo

Then use this Python snippet to verify that your scraper can access the hardware acceleration layer effectively.

This specific configuration ensures that your sentiment analysis model runs with maximum throughput on your AMD hardware.

import torch

import torch_directml

device = torch_directml.device()

print(f"Active Device: {device}")

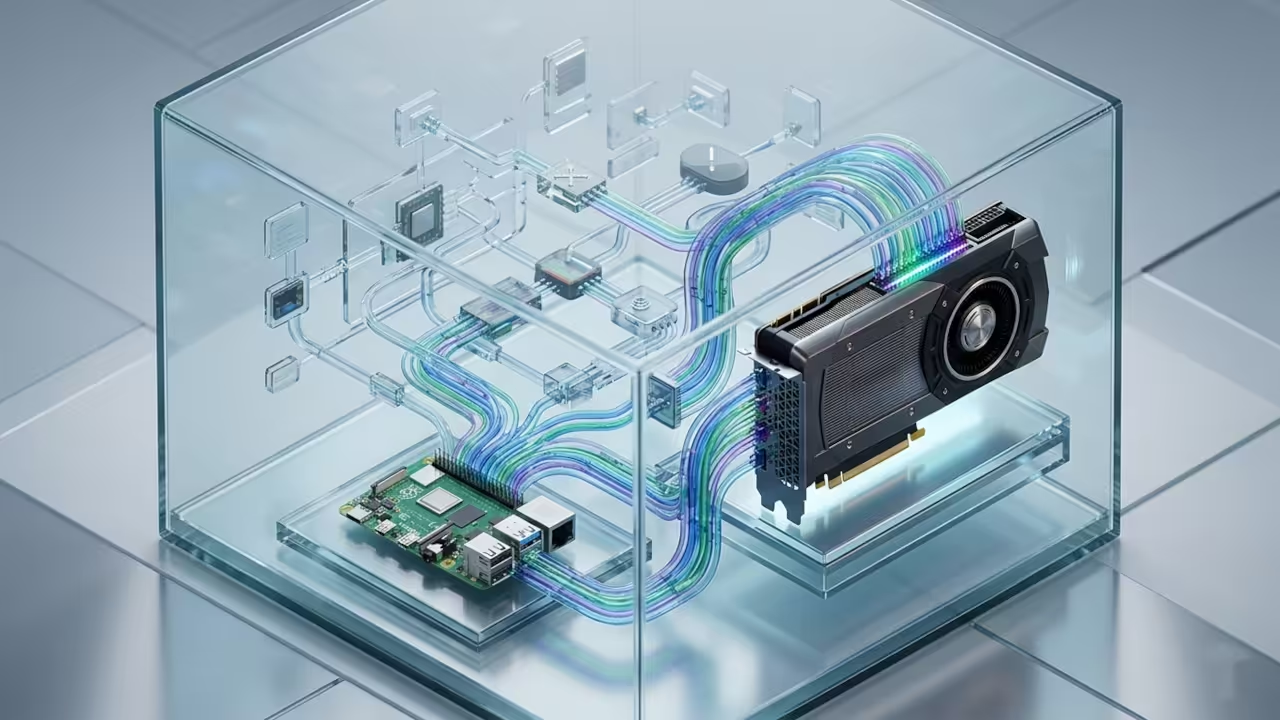

The following architectural breakthroughs enable you to scale this system across multiple nodes in a home lab.

You can now deploy lightweight agents on Raspberry Pi units to handle the initial data fetching tasks.

The central MI60 node then performs the heavy lifting of natural language processing and metadata tagging.

This distributed approach mirrors the enterprise strategies we have discussed in our previous technical deep dive sessions.

Master the Professional Stack

Elevate your technical proficiency by accessing our comprehensive library of architectural blueprints and creative guides.

These resources are designed to bridge the gap between high level design and practical implementation.

- Books Technical and Creative: Amazon Author Page

- Blueprints DIY Woodworking Projects: Ojambo Shop

- Tutorials Continuous Learning: Contact for Tutorials

- Consultations Custom Apps and Architecture: Professional Consultations

🚀 Recommended Resources

Disclosure: Some of the links above are referral links. I may earn a commission if you make a purchase at no extra cost to you.

Leave a Reply