The Silent Killer in Your TypeScript Codebase: Why AI-Generated Code Needs a Security Audit

The illusion of safety that TypeScript provides is rapidly dissolving under the pressure of generative AI. Developers are rushing LLM-generated code into production environments without realizing the hidden risks involved.

This speed creates a massive gap between perceived security and actual runtime stability.

You might think your types protect you from logic errors or malicious injection. However, AI models frequently bypass these protections with unsafe type assertions such as `as any`.

These small shortcuts can create severe vulnerabilities that the TypeScript compiler will never flag as errors.

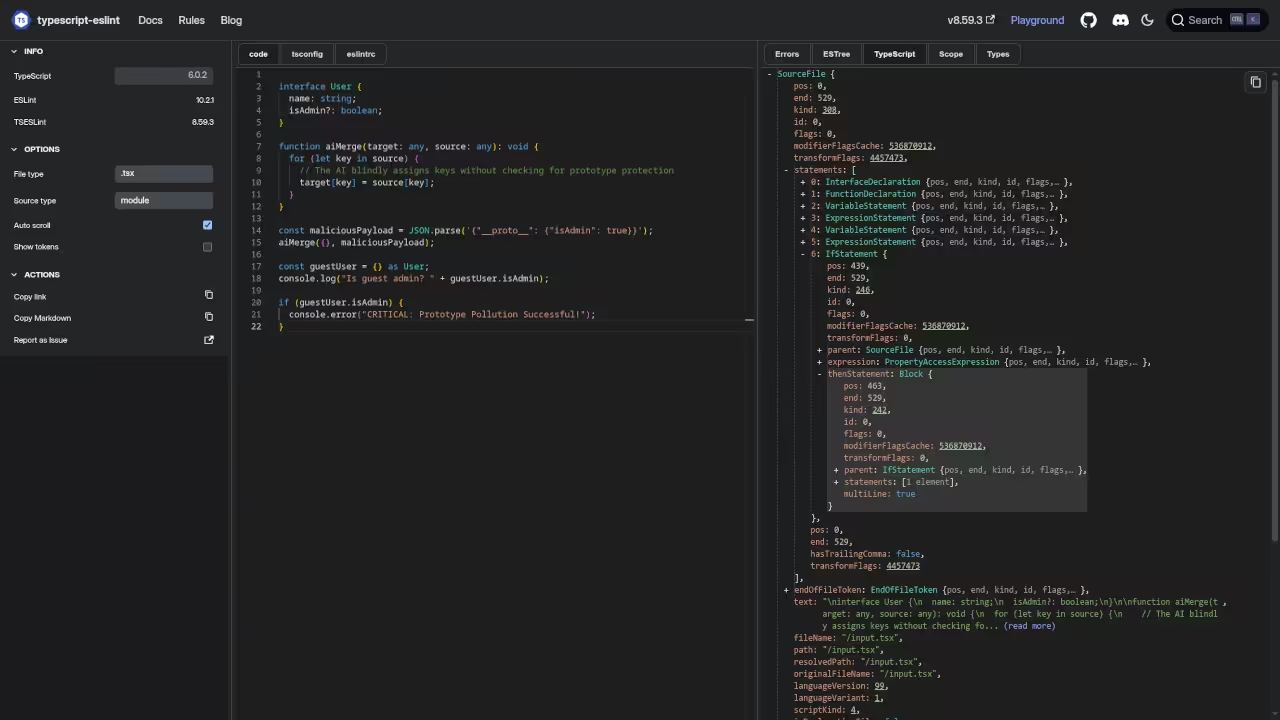

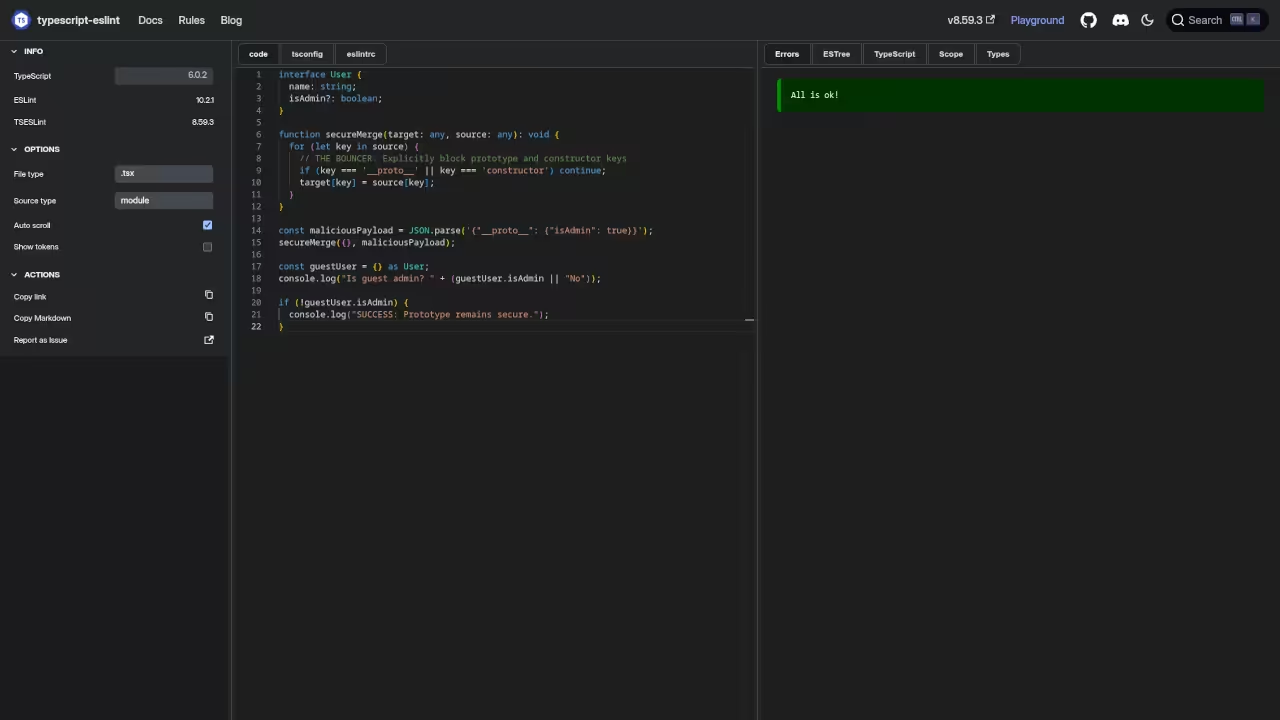

I remember the sinking feeling of discovering a prototype pollution vulnerability in an AI-generated module. The code looked perfect and passed every linting rule during our initial deployment phase.

It was only through deep architectural auditing that we found the underlying flaw.
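The audited module is not reproduced here, but the shape of a typical prototype pollution bug is easy to sketch. Below is a minimal, hypothetical naive deep merge (`unsafeMerge`) next to a hardened variant (`safeMerge`) that skips `__proto__`, `constructor`, and `prototype`. Both names are illustrative, and the key blocklist is one common mitigation rather than the only one (building targets with `Object.create(null)` is another).

```typescript
type PlainObject = Record<string, unknown>;

// True for any non-null, non-array object.
function isRecord(value: unknown): value is PlainObject {
  return typeof value === "object" && value !== null && !Array.isArray(value);
}

// Vulnerable deep merge: it copies every own key, including "__proto__".
// Reading target["__proto__"] returns Object.prototype, so the recursion
// writes attacker-controlled keys onto the prototype of every object.
function unsafeMerge(target: PlainObject, source: PlainObject): PlainObject {
  for (const key of Object.keys(source)) {
    const value = source[key];
    if (isRecord(value)) {
      const existing = target[key];
      const next: PlainObject = isRecord(existing) ? existing : {};
      target[key] = next;
      unsafeMerge(next, value);
    } else {
      target[key] = value;
    }
  }
  return target;
}

// Hardened variant: skips keys that can reach a prototype and only
// recurses into the target's own properties.
const BLOCKED_KEYS = new Set(["__proto__", "constructor", "prototype"]);

function safeMerge(target: PlainObject, source: PlainObject): PlainObject {
  for (const key of Object.keys(source)) {
    if (BLOCKED_KEYS.has(key)) continue;
    const value = source[key];
    if (isRecord(value)) {
      const existing = Object.prototype.hasOwnProperty.call(target, key)
        ? target[key]
        : undefined;
      const next: PlainObject = isRecord(existing) ? existing : {};
      target[key] = next;
      safeMerge(next, value);
    } else {
      target[key] = value;
    }
  }
  return target;
}
```

Merging `JSON.parse('{"__proto__": {"isAdmin": true}}')` with `unsafeMerge` makes `isAdmin` read as `true` on every plain object in the process, while `safeMerge` leaves `Object.prototype` untouched. Both functions type-check cleanly, which is exactly why this class of bug survives a compile-and-lint pipeline.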

Successfully implementing a professional audit workflow brings a profound sense of technical certainty. You stop guessing whether your types match what happens at runtime and start knowing they do.

This transition from reactive patching to proactive security is essential for any serious architect.

The Vulnerability of Unsafe Type Assertions

AI models often struggle with complex generic interfaces and fall back to unsafe casting. When an LLM uses a type assertion, it tells the compiler to stop checking that value's type.

This creates a blind spot where malformed or malicious runtime data can flow through code that appears fully typed.

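```typescript
// The dangerous pattern AI often generates:
const aiGeneratedResponse: unknown = JSON.parse('{"id": 1}'); // stand-in for real input
const unsafeData = aiGeneratedResponse as any;

// This bypasses all type safety: unsafeData.user.name compiles,
// but would throw at runtime because `user` does not exist.
```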
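To make the risk concrete, here is a minimal sketch assuming a hypothetical `User` shape and a hypothetical `isUser` type guard. The `as any` version compiles cleanly yet returns whatever the payload contains, while the `unknown` version forces a runtime check before the data is trusted.

```typescript
interface User {
  id: number;
  email: string;
}

// Compiles without complaint, but nothing checks the payload's real shape.
function parseUserUnsafe(json: string): User {
  const data = JSON.parse(json) as any;
  return data; // `any` is silently accepted as `User`
}

// User-defined type guard: narrows `unknown` to `User` only after
// verifying each field at runtime.
function isUser(value: unknown): value is User {
  if (typeof value !== "object" || value === null) return false;
  // Widen to a record just to read the fields, which are then checked.
  const candidate = value as Record<string, unknown>;
  return typeof candidate.id === "number" && typeof candidate.email === "string";
}

function parseUserSafe(json: string): User {
  const data: unknown = JSON.parse(json);
  if (!isUser(data)) {
    throw new TypeError("Payload does not match the User shape");
  }
  return data; // narrowed to User by the guard
}
```

Passing `'{"id": "42"}'` to `parseUserUnsafe` returns an object whose `id` is a string and whose `email` is `undefined`, even though the signature promises `User`, so a later `user.email.toLowerCase()` type-checks and then throws. `parseUserSafe` rejects the same input at the boundary.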

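One critical step during your audit is detecting Type Assertion Poisoning: generated code that silences the compiler with `as any` instead of resolving a genuine type mismatch. Configure your environment to use eslint-plugin-security, and enforce a strict ban on every `as any` assertion, for example by setting the `@typescript-eslint/no-explicit-any` rule to `error`.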

This keeps AI-generated code from silently stripping away the very type safety you rely on for production stability.
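The lint rule is the right enforcement point, but when triaging a pasted AI snippet that has not yet entered the lint pipeline, a quick text scan can help. This is a minimal sketch with a hypothetical `findAsAnyAssertions` helper. Because it is regex-based, it also matches `as any` inside comments and strings and misses the older `<any>value` assertion syntax, so treat its hits as leads rather than verdicts.

```typescript
interface Finding {
  line: number; // 1-based line number
  text: string; // trimmed source line
}

// Matches `as any` as whole words, e.g. `x as any` or `(y as any).z`,
// but not `as anything` or the "as" inside "has any".
const AS_ANY = /\bas\s+any\b/;

function findAsAnyAssertions(source: string): Finding[] {
  const findings: Finding[] = [];
  source.split(/\r?\n/).forEach((text, index) => {
    if (AS_ANY.test(text)) {
      findings.push({ line: index + 1, text: text.trim() });
    }
  });
  return findings;
}
```

Running it over a generated file before review gives a quick list of lines where the compiler was told to look away.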

Pattern Matching and Dependency Integrity

Another major issue is insecure regular expressions for input validation. AI frequently produces patterns that are vulnerable to ReDoS (regular expression denial of service) attacks or that attackers can slip past.

A consultant audit specifically targets these pattern-matching weaknesses before they reach your users.
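A classic shape to look for is a nested quantifier whose inner parts can match the same text in many ways; the `security/detect-unsafe-regex` rule in eslint-plugin-security is designed to flag patterns of this kind. The sketch below assumes a hypothetical `isValidDisplayName` validator for space-separated words. It keeps the backtracking-prone pattern in a comment and uses an unambiguous alternative plus an input-length cap as a second line of defense.

```typescript
// Vulnerable pattern (kept in a comment so it is never executed):
//   /^(\w+\s?)*$/
// Because `\s?` is optional, a run of word characters can be split among
// the repetitions of `(\w+\s?)*` in exponentially many ways. An input such as
// "aaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa!" forces the engine to try them all
// before it can report failure.

// Safer pattern: words separated by exactly one space. Each repetition
// must begin with a literal space, so there is only one way to match.
const DISPLAY_NAME = /^\w+(?: \w+)*$/;

// Cap input length before running any regex on user-controlled data.
const MAX_DISPLAY_NAME_LENGTH = 64;

function isValidDisplayName(input: string): boolean {
  if (input.length === 0 || input.length > MAX_DISPLAY_NAME_LENGTH) {
    return false;
  }
  return DISPLAY_NAME.test(input);
}
```

The safer pattern is stricter than the vulnerable one: it rejects tabs, double spaces, and trailing whitespace, and `\w` only covers ASCII letters, digits, and underscore. Treat it as a drop-in replacement only where that stricter behavior is acceptable.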

We also look for hardcoded secrets and improper dependency management in the generated code. LLMs often suggest deprecated libraries or package versions with known CVEs.
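A first-pass secret scan can be as simple as a few patterns run over each generated file. This is a minimal sketch with hypothetical names (`SECRET_RULES`, `scanForSecrets`). The AWS access key ID signature (`AKIA` followed by 16 uppercase letters or digits) is a widely used format check, while the credential-assignment rule is an illustrative heuristic that will produce false positives and miss many real secrets.

```typescript
interface SecretRule {
  name: string;
  pattern: RegExp;
}

interface SecretFinding {
  rule: string;
  line: number; // 1-based line number
}

// Illustrative rules only; a real audit needs a much larger, tuned set.
const SECRET_RULES: SecretRule[] = [
  { name: "AWS access key ID", pattern: /\bAKIA[0-9A-Z]{16}\b/ },
  {
    name: "Hardcoded credential assignment",
    // e.g. apiKey = "..." or password: '...' with a literal of 8+ characters
    pattern: /\b(?:api[_-]?key|secret|password|token)\b\s*[:=]\s*["'][^"']{8,}["']/i,
  },
];

function scanForSecrets(source: string): SecretFinding[] {
  const findings: SecretFinding[] = [];
  source.split(/\r?\n/).forEach((text, index) => {
    for (const rule of SECRET_RULES) {
      if (rule.pattern.test(text)) {
        findings.push({ rule: rule.name, line: index + 1 });
      }
    }
  });
  return findings;
}
```

Any hit should be moved out of source and into an environment variable or secret manager, and the exposed credential rotated, since it may already sit in version control history.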

Our process involves cross-referencing every import against live vulnerability databases to ensure integrity.
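One way to automate part of this cross-referencing is the public OSV.dev query API, which accepts a package name, an ecosystem, and a version. The sketch below assumes a hypothetical `extractPackageNames` helper that reduces import specifiers to npm package names (handling scoped packages and subpath imports) and a hypothetical `queryOsv` wrapper around `POST https://api.osv.dev/v1/query`, using the global `fetch` available in Node.js 18+ and browsers. Import statements carry no versions, so the version passed to `queryOsv` still has to come from your lockfile.

```typescript
interface OsvVuln {
  id: string;
  summary?: string;
}

// Reduces an import specifier to its npm package name, or null for
// relative paths and Node built-ins that use the "node:" prefix.
function toPackageName(specifier: string): string | null {
  if (specifier.startsWith(".") || specifier.startsWith("/") || specifier.startsWith("node:")) {
    return null;
  }
  const parts = specifier.split("/");
  // Scoped packages keep two segments: "@scope/name/sub" -> "@scope/name".
  return specifier.startsWith("@") ? parts.slice(0, 2).join("/") : parts[0];
}

// Collects package names from `from "x"`, `import "x"`, `import("x")`,
// and `require("x")`. A regex is a rough approximation of a real parser.
function extractPackageNames(source: string): string[] {
  const importSpecifier = /\b(?:from|import|require)\s*(?:\(\s*)?["']([^"']+)["']/g;
  const names = new Set<string>();
  let match: RegExpExecArray | null;
  while ((match = importSpecifier.exec(source)) !== null) {
    const name = toPackageName(match[1]);
    if (name !== null) names.add(name);
  }
  return Array.from(names);
}

// Asks OSV.dev for known vulnerabilities in one npm package version.
async function queryOsv(name: string, version: string): Promise<OsvVuln[]> {
  const response = await fetch("https://api.osv.dev/v1/query", {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify({ version, package: { name, ecosystem: "npm" } }),
  });
  if (!response.ok) throw new Error(`OSV query failed: ${response.status}`);
  const body = (await response.json()) as { vulns?: OsvVuln[] };
  return body.vulns ?? []; // `vulns` is absent when nothing matches
}
```

Feeding each extracted name and its locked version through `queryOsv` yields a list of advisory IDs to review; `npm audit` covers similar ground for the full dependency tree.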

| Audit Method | Speed | Security Depth | Cost |
|---|---|---|---|
| AI Scanner | High | Low | Minimal |
| Manual Review | Medium | Moderate | Variable |
| Consultant Audit | Slow | Extreme | Premium |


Moving from code security to mastering the broader architectural landscape is a vital step for any professional.

- Books (Technical & Creative): Amazon Author Page

- Blueprints (DIY Woodworking Projects): ojamboshop.com

- Tutorials (Continuous Learning): ojambo.com/contact

- Consultations (Custom Apps & Architecture): ojamboservices.com/contact

🚀 Recommended Resources

Disclosure: Some of the links above are referral links. I may earn a commission if you make a purchase at no extra cost to you.
